Experiments demonstrate that our proposed methods are easy to adopt, stable to train, and highly effective, especially on complex compression models.

Training with additive uniform noise approximates the quantization error variationally but suffers from a train-test mismatch. The other two methods do not encounter this mismatch but, as shown in this paper, hurt the rate-distortion performance because the representation ability of the latent is weakened. We thus propose a novel soft-then-hard quantization strategy for neural image compression that first learns an expressive latent space softly, then resolves the train-test mismatch with hard quantization. In addition, beyond fixed integer quantization, we apply scaled additive uniform noise to adaptively control the quantization granularity by deriving a new variational upper bound on the actual rate.
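As a rough illustration of the soft and hard stages and of the scaled noise, the sketch below operates on a plain list of latent values. It is not the authors' implementation: the function names, the `stage` switch, and the step size `delta` are hypothetical, and the form "noise scaled by `delta` during training, rounding to multiples of `delta` at test time" is an assumed reading of "scaled additive uniform noise". The network, the entropy model, and the rate bound itself are omitted.

```python
import math
import random


def scaled_noise(y, delta, rng):
    """Soft stage (assumed form): add uniform noise drawn from [-delta/2, delta/2)."""
    return [v + delta * rng.uniform(-0.5, 0.5) for v in y]


def scaled_round(y, delta):
    """Hard stage: snap each value to the nearest multiple of delta (half rounds up)."""
    return [delta * math.floor(v / delta + 0.5) for v in y]


def quantize(y, stage, delta=1.0, rng=None):
    """Hypothetical switch between the two training stages.

    "soft": noisy latents, used while the latent space is being learned.
    "hard": truly quantized latents, matching what is used at test time.
    """
    if stage == "soft":
        return scaled_noise(y, delta, rng or random.Random(0))
    if stage == "hard":
        return scaled_round(y, delta)
    raise ValueError(f"unknown stage: {stage!r}")


latents = [0.26, 1.3]
soft = quantize(latents, "soft", delta=0.5, rng=random.Random(0))
hard = quantize(latents, "hard", delta=0.5)
```

With `delta = 1.0` this reduces to the usual integer quantization; a smaller `delta` gives a finer grid, which is one way to read "adaptively control the quantization granularity".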

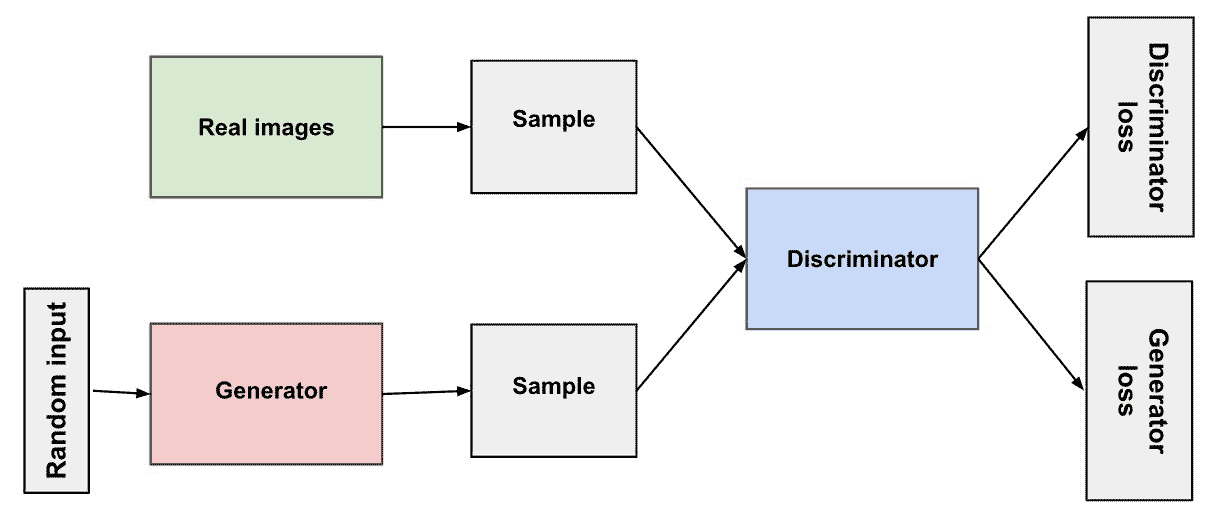

Quantization is one of the core components in lossy image compression. For neural image compression, end-to-end optimization requires differentiable approximations of quantization, which can generally be grouped into three categories: additive uniform noise, the straight-through estimator, and soft-to-hard annealing.
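The three categories can be sketched on scalar latents in plain Python. This is a minimal illustration under stated assumptions, not code from the paper: the function names are hypothetical, the straight-through estimator is shown as a (value, surrogate gradient) pair because no autodiff framework is used, and soft-to-hard annealing is written as a softmax-weighted average over a small set of integer `centers` with a sharpness parameter `sigma`.

```python
import math
import random


def hard_round(v):
    """Test-time quantizer: nearest integer, with halves rounded up."""
    return float(math.floor(v + 0.5))


def additive_uniform_noise(y, rng):
    """Training surrogate 1: replace rounding with noise from U(-0.5, 0.5)."""
    return [v + rng.uniform(-0.5, 0.5) for v in y]


def straight_through(y):
    """Training surrogate 2: round in the forward pass and treat the
    derivative of rounding as 1 in the backward pass.
    Returns (rounded values, surrogate gradients)."""
    return [hard_round(v) for v in y], [1.0 for _ in y]


def soft_to_hard(y, centers, sigma):
    """Training surrogate 3: softmax-weighted average of the centers.
    As sigma grows, the output approaches the nearest center."""
    out = []
    for v in y:
        logits = [-sigma * (v - c) ** 2 for c in centers]
        m = max(logits)  # subtract the max for numerical stability
        weights = [math.exp(l - m) for l in logits]
        total = sum(weights)
        out.append(sum(w * c for w, c in zip(weights, centers)) / total)
    return out


y = [0.3, -0.7]
noisy = additive_uniform_noise(y, random.Random(0))
ste_values, ste_grads = straight_through(y)
smooth = soft_to_hard(y, centers=[-1.0, 0.0, 1.0], sigma=1.0)
sharp = soft_to_hard(y, centers=[-1.0, 0.0, 1.0], sigma=1000.0)
```

Only the noisy variant differs from rounding at test time, which is the train-test mismatch; the other two coincide with rounding in the forward pass (exactly, or in the limit of large `sigma`).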
